Understanding Vector Databases and Their Relation to Large Language Models

Data organization and retrieval are essential tasks in artificial intelligence and machine learning applications. Vector databases are often used to improve the accuracy and efficiency of these procedures. Let’s define what vector databases are to understand their importance.

What is a Vector Database?

High-dimensional vector data can be stored, managed, and retrieved using vector databases, also referred to as vector search engines. They are increasingly being used in the AI community due to their capacity to manage the sophisticated data types that machine learning models produce and employ.

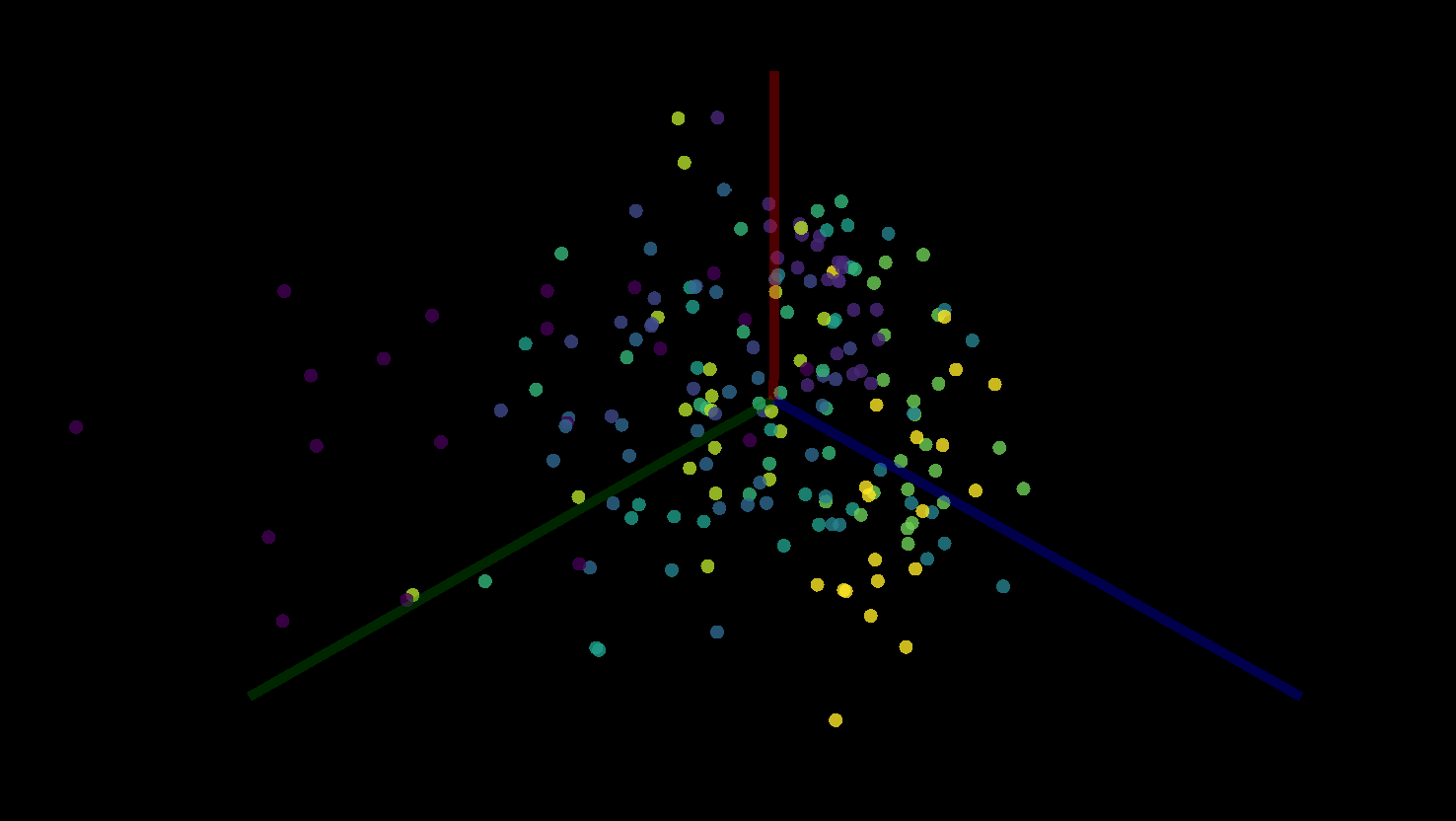

A vector is a magnitude-and-direction mathematical object that is represented as an array of numbers. Vectors are frequently used in machine learning to describe features extracted from data, such as images, text, or audio, in a way that mathematical models can comprehend and use to learn from. These high-dimensional vectors are kept up to date by a vector database, enabling quick similarity searches—the core of many AI applications.

Why Are Vector Databases Needed?

Managing structured data, such as text and numbers, is a strength of traditional and relational databases. However, they struggle when dealing with high-dimensional vector data, which is naturally unstructured.

Think about using a database search to find similar images, for instance. Since traditional databases by default cannot comprehend or measure “similarity” in the context of image data, they would struggle to complete this task. However, a vector database can quickly handle this task by contrasting the vectors used to represent the various features of each image. The distance between vectors in the high-dimensional space, which can be determined using algorithms like cosine similarity or Euclidean distance, is used by the vector database to measure similarity.

Vector Databases and Large Language Models: An Intricate Relationship

Transformator-based large language models, like OpenAI’s GPT-3.5 and 4, can generate text that resembles human speech. They learn to anticipate the next word in a sentence based on the previous context through training on enormous amounts of text data.

But how do these models relate to vector databases? First off, high-dimensional vectors can be used to represent the outputs and internal states of these models. Each word in the language model’s vocabulary, or more precisely, each token, has an embedding—a high-dimensional vector that the model has associated with the token’s usage and meaning.

These embeddings are updated as the language model analyzes the text, then combined in intricate ways to represent the sentence’s current meaning. The context of the conversation or text being processed is captured in a high-dimensional vector.

In a vector database, these vectors can be effectively stored and managed. They can be used later to pick up where a conversation left off, to look for similar contexts, or for any other purpose that calls for comprehension of the semantic content of the text.

Additionally, vector databases can be helpful when large language models need to locate comparable data points for tasks like recommendation systems, semantic search, or data analysis. These models can quickly and accurately retrieve data based on semantic similarity instead of just keyword matching by vectorizing the text and storing it in a vector database.

Conclusion

Many modern AI toolkits are incomplete without vector databases, which can handle high-dimensional data and conduct effective similarity searches. They are essential in managing the complex, vector-based data that GPT-4 and other large language models generate and use. The value of vector databases will only increase as we create more complex AI models.